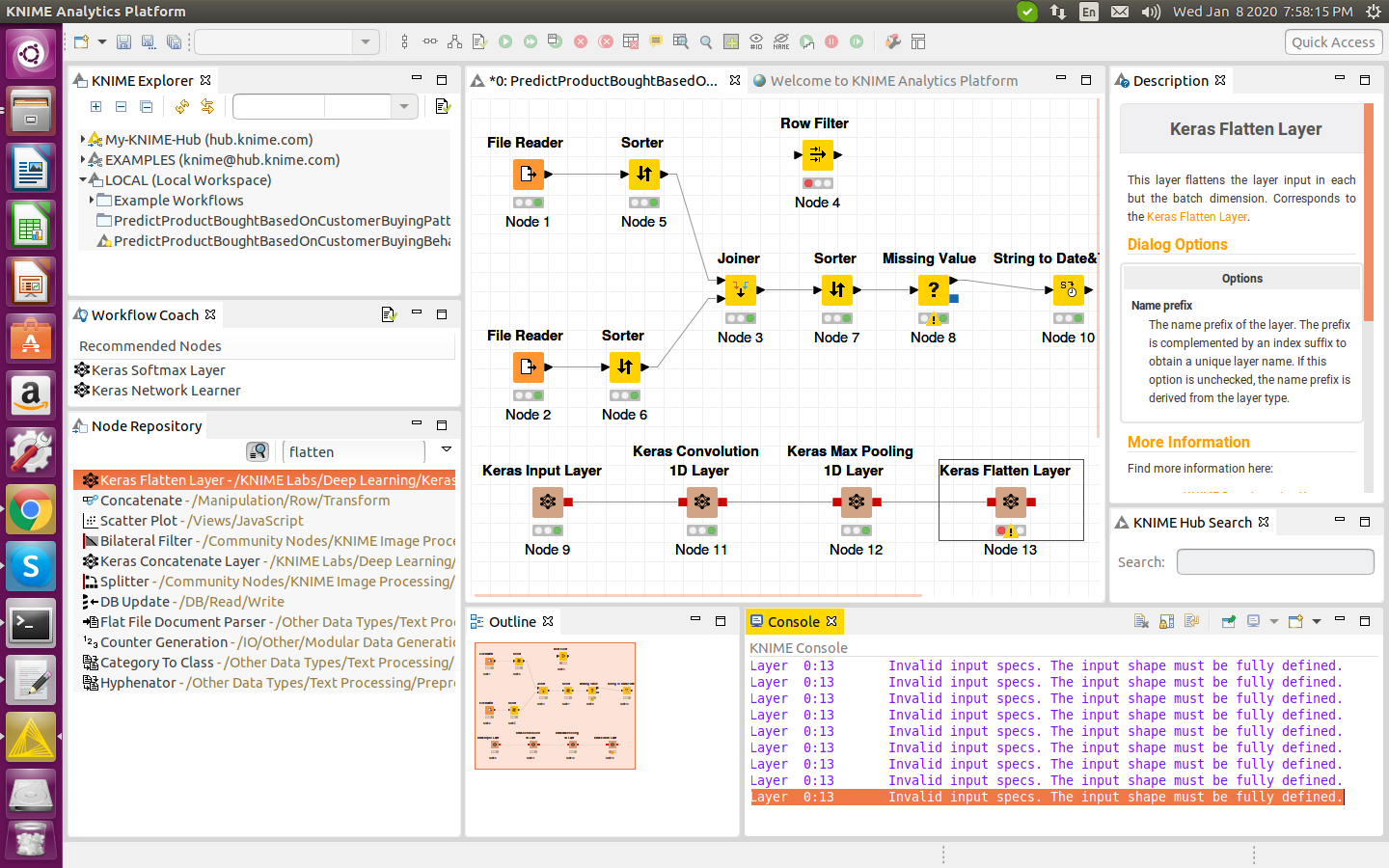

In CNN transfer learning, after applying convolution and pooling, is a Flatten() layer necessary? I have seen an example where, after removing the top layer of a VGG16, the first layer applied was GlobalAveragePooling2D() and then Dense():

    base_model=MobileNet(weights='imagenet',include_top=False) #imports the mobilenet model and discards the last 1000 neuron layer.
    x=base_model.output
    x=GlobalAveragePooling2D()(x)
    x=Dense(1024,activation='relu')(x) #we add dense layers so that the model can learn more complex functions and classify for better results.
    x=Dense(1024,activation='relu')(x) #dense layer 2
    x=Dense(512,activation='relu')(x) #dense layer 3
    preds=Dense(3,activation='softmax')(x) #final layer with softmax activation

In another example, Flatten() was used instead:

    vgg = VGG16(input_shape=IMAGE_SIZE + [3], weights='imagenet', include_top=False)
    # our layers - you can add more if you want
    x = Flatten()(vgg.output)
    prediction = Dense(len(folders), activation='softmax')(x)
    model = Model(inputs=vgg.input, outputs=prediction)

What is the difference if both can be applied?

Although the first answer has explained the difference, I will add a few other points. If the network applies a lot of pooling, the feature map size becomes very small. It is then more likely that the information is dispersed across different feature maps, and the individual elements of one feature map don't hold much information. Global average pooling therefore reduces the dimension, which in turn reduces the number of parameters once the result is joined to the Dense layer.

Excerpt from Hands-On Machine Learning by Aurélien Géron:

The global average pooling layer outputs the mean of each feature map: this drops any remaining spatial information, which is fine because there was not much spatial information left at that point. Indeed, GoogLeNet input images are typically expected to be 224 × 224 pixels, so after 5 max pooling layers, each dividing the height and width by 2, the feature maps are down to 7 × 7. Moreover, it is a classification task, not localization, so it does not matter where the object is. Thanks to the dimensionality reduction brought by this layer, there is no need to have several fully connected layers at the top of the CNN (like in AlexNet), and this considerably reduces the number of parameters in the network and limits the risk of overfitting.

You can apply this concept to your own model too and compare the results and parameter counts for different cases, such as a small and a large model. Anyway, transfer learning is just a special case of working with a neural network: you continue to use an already trained model.

I am trying to understand the role of the Flatten function in Keras. Below is my code, which is a simple two-layer network. It takes in 2-dimensional data of shape (3, 2), and outputs 1-dimensional data of shape (1, 4):

    model = Sequential()
    model.add(Dense(16, input_shape=(3, 2)))
    model.add(Flatten())
    model.add(Dense(4))
    model.compile(loss='mean_squared_error', optimizer='SGD')

However, if I remove the Flatten line, the output y has shape (1, 3, 4). From my understanding of neural networks, the model.add(Dense(16, input_shape=(3, 2))) call creates a hidden fully-connected layer with 16 nodes. Each of these nodes is connected to each of the 3x2 input elements. Therefore, the 16 nodes at the output of this first layer are already "flat". So, the output shape of the first layer should be (1, 16). Then, the second layer takes this as an input, and outputs data of shape (1, 4). So if the output of the first layer is already "flat" and of shape (1, 16), why do I need to further flatten it?

Flattening a tensor means removing all of the dimensions except for one. This is exactly what the Flatten layer does.

If we take the original model (with the Flatten layer) into consideration, we get a model summary with the columns Layer (type), Output Shape, and Param #. The image above will hopefully make the input and output sizes of each layer in this summary a little clearer. The output shape for the Flatten layer, as you can read, is (None, 48). That 48 is 3 × 16: a Dense layer is applied to the last axis only, so the first layer outputs shape (None, 3, 16), not (None, 16). You should read (None, 48) as (1, 48) or (2, 48), and so on; None in that position means any batch size. Recall that for the inputs, the first dimension is the batch size and the second is the number of input features.
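To make the shapes in the Flatten question concrete, here is a minimal sketch in plain Python that traces output shapes and parameter counts by hand for the two-layer network, with and without the Flatten layer. It is hand arithmetic rather than Keras itself: the helper names `dense`, `flatten`, and `trace` are hypothetical, and the sketch assumes a Dense layer acts on the last axis only and holds `inputs * units` weights plus `units` biases.

```python
from math import prod


def dense(shape, units):
    """Dense acts on the last axis only; returns (output shape, parameter count)."""
    *leading, features = shape
    return (*leading, units), features * units + units  # weights + biases


def flatten(shape):
    """Flatten keeps the batch axis and merges every remaining axis into one."""
    batch, *rest = shape
    return (batch, prod(rest)), 0  # no trainable parameters


def trace(input_shape, layers):
    """Apply each (name, function) pair in turn and record shape and parameters."""
    shape = input_shape
    rows = []
    for name, layer in layers:
        shape, params = layer(shape)
        rows.append((name, shape, params))
    return rows


# None stands for "any batch size", as in a Keras model summary.
with_flatten = trace((None, 3, 2), [
    ("Dense(16)", lambda s: dense(s, 16)),
    ("Flatten", flatten),
    ("Dense(4)", lambda s: dense(s, 4)),
])
without_flatten = trace((None, 3, 2), [
    ("Dense(16)", lambda s: dense(s, 16)),
    ("Dense(4)", lambda s: dense(s, 4)),
])

for name, shape, params in with_flatten:
    print(f"{name:<10} {str(shape):<16} {params}")
```

With Flatten, the trace ends at shape (None, 4); without it, the last Dense layer is applied to each of the 3 rows separately and the trace ends at (None, 3, 4), which matches the (1, 3, 4) output reported in the question.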
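The parameter saving from global average pooling can be checked with simple arithmetic. The sketch below is plain Python, not Keras: `global_average_pool` and `dense_params` are hypothetical helpers, and the 7 × 7 × 512 feature map and the 3 output classes are assumptions chosen for illustration (a 7 × 7 map as in the excerpt, 512 channels as a VGG16 base without its top produces for 224 × 224 inputs, and 3 classes as in the MobileNet snippet).

```python
def global_average_pool(feature_maps):
    """Take feature_maps[h][w][c] and return one mean per channel."""
    height, width = len(feature_maps), len(feature_maps[0])
    channels = len(feature_maps[0][0])
    return [
        sum(feature_maps[i][j][c] for i in range(height) for j in range(width))
        / (height * width)
        for c in range(channels)
    ]


def dense_params(inputs, units):
    """Parameters of a fully connected layer: one weight per pair, plus biases."""
    return inputs * units + units


# A tiny 2 x 2 feature map with 2 channels: each channel collapses to its mean.
tiny = [[[1.0, 10.0], [2.0, 20.0]],
        [[3.0, 30.0], [4.0, 40.0]]]
pooled = global_average_pool(tiny)

# Assumed sizes for illustration only (see the lead-in).
height, width, channels, classes = 7, 7, 512, 3

flatten_head = dense_params(height * width * channels, classes)  # Flatten -> Dense
gap_head = dense_params(channels, classes)                       # GAP -> Dense

print("pooled tiny map:", pooled)
print("Flatten + Dense parameters:", flatten_head)
print("GAP + Dense parameters:", gap_head)
```

Under these assumptions the Flatten head feeds 25,088 values into the Dense layer while the pooled head feeds only 512, so the first Dense layer after pooling is 49 times smaller in its weight matrix.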
